Deepfakes and wire fraud: How to protect transactions from digital impersonators

What are deepfakes and how are they being used to commit wire fraud?

Tom Cronkright

5 minutes

Artificial Intelligence

Jun 24, 2024

Apr 22, 2026

Key Takeaways

- Deepfakes are computer-generated photos, videos, or audio that mimic authentic content. They are often used to impersonate real people without their permission

- Deepfakes can impersonate trusted parties to a transaction. This allows criminals to manipulate wire transfer instructions and divert funds to their own accounts

- According to the 2026 State of Wire Fraud Report, BEC attacks have surged 1,760% since generative AI tools became widely available — and deepfake-enabled impersonation is driving that threat deeper into real estate transactions.

- Use identity verification software that includes liveness detection. This confirms identities, helps combat deepfakes, and prevents wire fraud

- Train your team to recognize deepfake warning signs. Look for unnatural facial expressions, inconsistent lighting, and odd speech patterns

In early 2024, a finance worker at British engineering firm Arup wired $25.6 million to criminals after joining a video call where every participant, including the company's CFO, turned out to be a deepfake.

The employee had initially suspected a phishing email, but put aside his doubts because the people on the call looked and sounded exactly like colleagues he recognized. The funds were sent across 15 separate transactions before the fraud was discovered.

Deepfakes are the latest threat to catch the attention of federal lawmakers. They've reignited public debate about privacy and consent. But the use of deepfakes for committing financial crimes still flies under the radar for most title and escrow professionals.

Fraudsters are counting on that gap. According to our research, 60.1% of title professionals say fraud attempts have increased in frequency over the past year — and 72.6% report those attempts are becoming more sophisticated.

In this guide, you'll learn how deepfakes enable wire fraud schemes targeting title companies, law firms, and their clients. We'll cover how to identify computer-generated impersonation attempts and proven strategies to protect your transactions.

What is deepfake technology? How digital impersonation works

Deepfakes are computer-generated photos, videos, or audio that mimic authentic content. They are often used to impersonate real people without their permission. The technology works by ingesting original audiovisual data. It then generates avatars matching the look, facial expressions, tone, style, and gestures of a real person.

Two capabilities make deepfakes particularly dangerous in a real estate context:

- Face-swapping generates a digital version of a real person's face and applies it to another body in a video or photo. A fraudster doesn't need to look like their target — the software handles the rest.

- Speech synthesis interprets text and generates speech in the voice of a real person. If someone has attended your webinars, appeared in listing videos, or posted on social media, there's likely enough source material online to clone a convincing version of their voice.

Unlike simple photo filters, high-quality deepfakes are difficult to recognize, and those used in fraud are built to deceive. The most convincing ones require large amounts of source material, such as thousands of recordings or images of the target.

This is why fraudsters typically focus on people with a large online presence: business executives, real estate professionals, and anyone whose face and voice appear regularly in public content.

How criminals use deepfake AI for wire fraud

There are positive uses for this technology, such as dubbing media in other languages. But criminals often use it for social engineering — manipulating people to gain trust and enable crime. Deepfakes impersonate trusted parties to change wire instructions and divert funds to a criminal's bank account.

Here are the four most common attack methods targeting real estate transactions:

1. Video call impersonation

A fraudster can use deepfake technology to imitate a lender or real estate professional on a video conference call. They appear as a legitimate party and can then instruct a property seller to wire their mortgage payoff to the fraudster's bank. Scammers also use this method to imitate sellers, convincing title companies to send buyer funds to an untraceable account.

2. Voice cloning and phone fraud

Deepfakes have reached a point where even experienced professionals cannot always spot the difference between a computer-generated voice and the real thing.

In the Arup case, fraudsters used deepfake voices and face-swapping to imitate multiple colleagues in a single video meeting — convincing a finance worker to send $25.6 million across 15 transactions.

The implication for title professionals is direct: if your team relies on recognizing a familiar voice to confirm wire instructions, that process is no longer a reliable safeguard.

3. Combined phishing and deepfake attacks

Caller ID spoofing lets fraudsters disguise their real phone numbers, making it appear they are calling from a trusted business. SIM-swap attacks go further by taking over a real person's phone number outright.

When a fraudster calls from a familiar number with a recognizable voice, even experienced professionals can fall victim. This is why verifying identities through a single channel — even a phone callback — is no longer enough on its own.

4. Biometric system bypass

Deepfakes can fool basic identity verification tools. Certain software collects user biometrics from facial scans to confirm identity before authorizing transactions. Fraudsters use face-swapping during these scans to pass as authentic users — and once logged in, they can authorize wire transfers to their own accounts.

This is exactly why liveness detection — technology that confirms a live human is present rather than a recording or generated image — has become a baseline requirement for identity verification in real estate.

The table below shows how deepfake technology has changed traditional fraud methods:

These methods are not theoretical. Our recent data found that BEC attacks have increased by an astonishing 1,760% since generative AI tools became common. Now, 57.5% of title professionals face suspicious activity at least once every three months. Generative AI is making these attacks faster, cheaper, and more convincing every month.

Warning signs: How to detect deepfake attempts in real-time

You can learn to spot the glitches in deepfakes. Recognizing these red flags during video calls can disrupt fraud before funds leave the account.

Visual detection clues to watch for:

- Some facial features lack definition or appear blurred

- Color, lighting, or texture appears inconsistent across a participant's face

- Gestures and facial expressions look unnatural, such as unblinking eyes

- The participant's face is familiar, but their clothing and background are unusual

- Lip movements do not perfectly align with speech

These visual artifacts are more noticeable on larger screens. When possible, review video calls on a full-size monitor rather than a phone.

Audio detection clues that signal a potential deepfake:

- The voice sounds convincing, but the speaker uses uncharacteristic speech patterns

- There are slight robotic undertones or unusual pauses between words

- Audio quality is inconsistent and does not match the video quality

- Background noise seems artificially generated

If anything sounds off, ask the speaker an unscripted question about a shared experience. Deepfakes can mimic appearances but cannot recall specific details from prior conversations.

Contextual red flags that should trigger immediate verification:

- Requests for wire transfers happen during unusual hours

- The person is reluctant to answer spontaneous questions about shared experiences

- They request to bypass normal verification procedures

- Technical issues conveniently prevent a closer examination of video quality
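For teams that log call notes in software, the contextual red flags above can be turned into a simple checklist. The sketch below is illustrative only: the flag names and the `flags_requiring_verification` function are hypothetical, and it assumes any single flag is enough to pause and verify.

```python
# Hypothetical flag names mirroring the contextual red flags listed above.
RED_FLAGS = {
    "unusual_hours": "Wire request arrived during unusual hours",
    "avoids_questions": "Reluctant to answer spontaneous questions",
    "bypass_request": "Asked to bypass normal verification procedures",
    "convenient_tech_issues": "Technical issues prevented a closer look at the video",
}


def flags_requiring_verification(observed: set[str]) -> list[str]:
    """Return the recognized red flags, sorted; any non-empty result means verify first."""
    return sorted(name for name in observed if name in RED_FLAGS)


if __name__ == "__main__":
    hits = flags_requiring_verification({"unusual_hours", "bypass_request"})
    for name in hits:
        print(f"VERIFY BEFORE WIRING: {RED_FLAGS[name]}")
```

A checklist like this does not detect deepfakes. It only makes sure a human-observed warning sign always leads to the same next step.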

When limited source data is available, deepfake software cannot generate convincing impersonations. Your team can learn to spot these limitations — but detection alone is not a reliable defense. Technology-based verification is essential.

How to prevent deepfakes from compromising wire transfers: 9 proven strategies

The best defense against deepfake wire fraud requires a layered approach. You need to combine technology, training, and verification protocols. Here are 9 strategies your team can implement now.

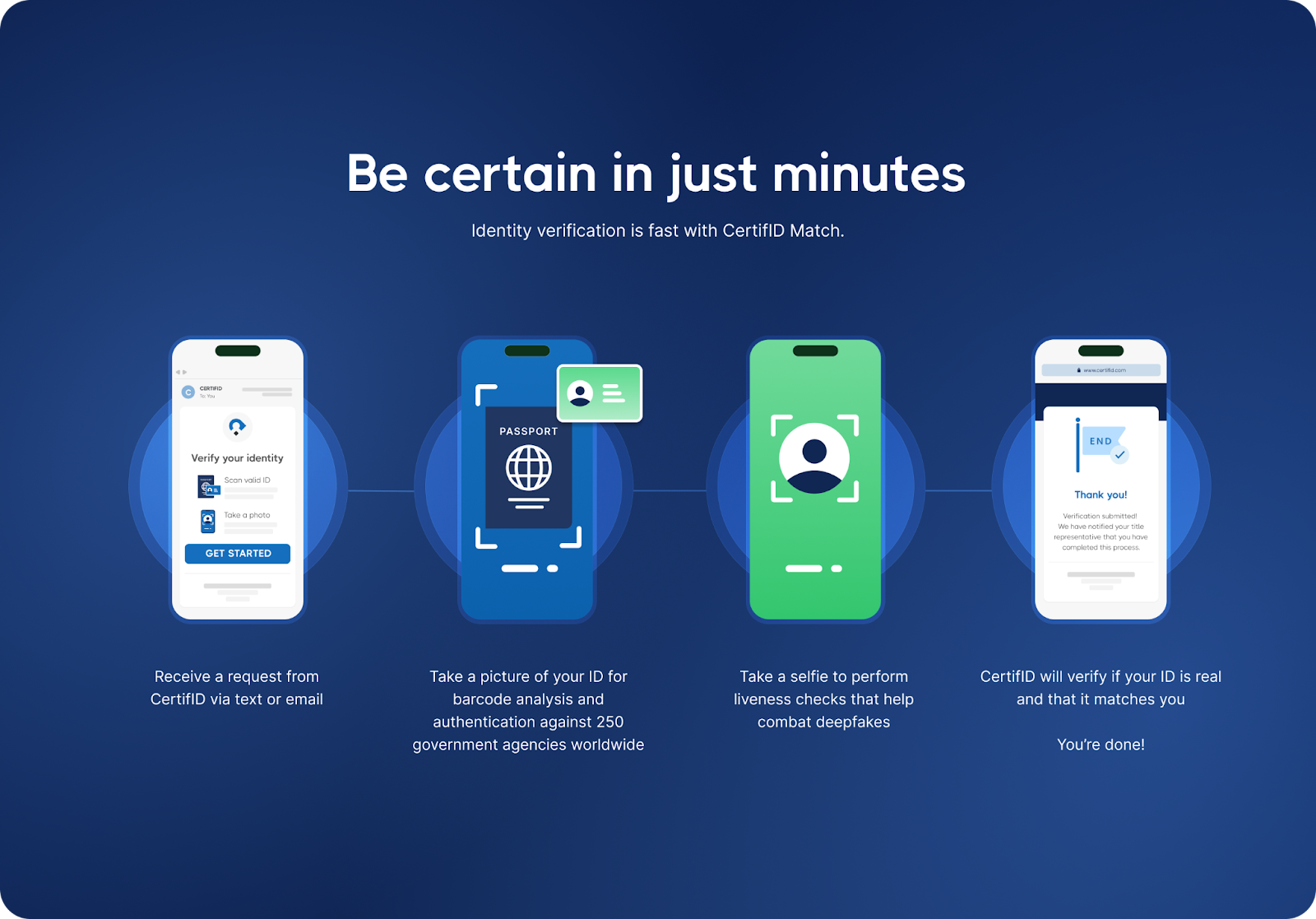

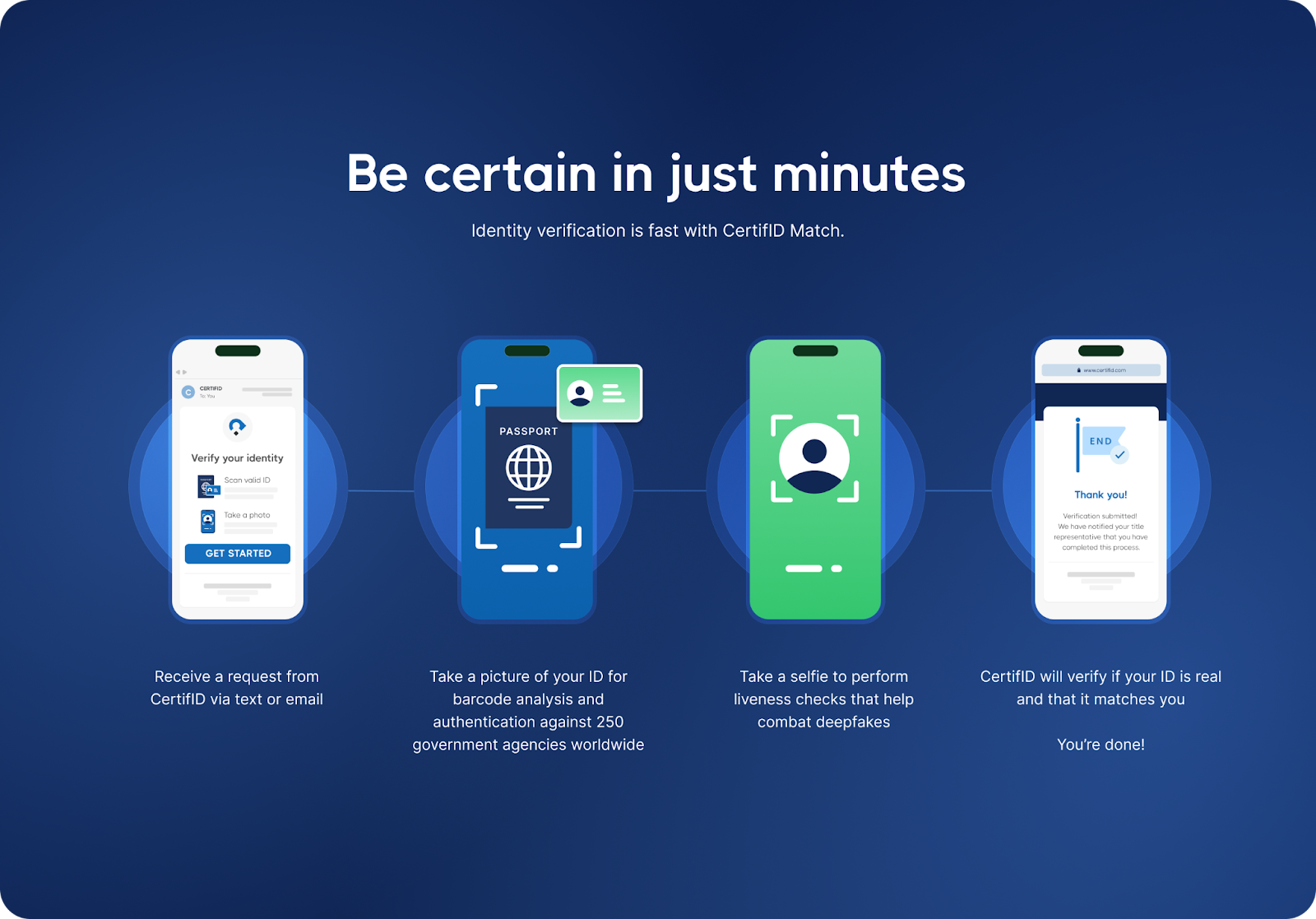

1. Use identity verification software with liveness detection

Use verification software that includes liveness detection — technology that confirms a genuine human is present and rejects photos, recordings, and computer-generated renderings.

CertifID Match helps combat deepfakes, SIM-swap, and phishing by analyzing over 150 fraud indicators in real-time.

It matches biometrics from a live selfie to a government-issued ID, cross-referencing results against databases from 250 government agencies worldwide.

2. Establish multi-channel verification protocols

Never rely on a single channel for wire transfer authorization. If you receive instructions via video call, confirm them through a secure platform. If you receive verbal instructions, require written confirmation through a tool that independently validates those details.

Every wire instruction change deserves a second check through a separate, verified channel, with no exceptions and no shortcuts, even under deadline pressure.
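As a rough illustration, the multi-channel rule can be written as a single check: a request is approved only when a verified confirmation arrives on a different channel than the one the request came in on. The `Confirmation` type, the channel names, and `is_authorized` are assumptions made for this sketch, not part of any specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Confirmation:
    channel: str    # hypothetical labels, e.g. "video_call", "secure_portal"
    verified: bool  # True only if the channel itself was independently verified


def is_authorized(request_channel: str, confirmations: list[Confirmation]) -> bool:
    """Approve only if a verified confirmation came through a different channel."""
    return any(c.verified and c.channel != request_channel for c in confirmations)


if __name__ == "__main__":
    # A second confirmation on the same video call does not count.
    print(is_authorized("video_call", [Confirmation("video_call", True)]))
    # A verified confirmation through a separate secure platform does.
    print(is_authorized("video_call", [Confirmation("secure_portal", True)]))
```

The point of the design is that a convincing face or voice on the original channel can never satisfy the check by itself.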

3. Create standardized verification procedures

Develop standardized procedures using independently verified contact information. When wire instructions change or you handle high-value transfers, always verify through contact details from your original files — not information provided in recent communications.

This matters especially for seller impersonation fraud involving vacant land, where fraudsters target absentee property owners who may not notice something is wrong until the deal has already closed.
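The "contact details from your original files" rule can be sketched in a few lines. This is a minimal example under stated assumptions: the file of record is modeled as a plain dictionary, and `callback_number` and `digits_only` are hypothetical helper names.

```python
def digits_only(phone: str) -> str:
    """Strip formatting so '(616) 555-0100' and '616-555-0100' compare equal."""
    return "".join(ch for ch in phone if ch.isdigit())


def callback_number(file_of_record: dict[str, str], party: str,
                    number_in_message: str) -> tuple[str, bool]:
    """Return the number to call (always from the original file) and a mismatch flag."""
    trusted = file_of_record[party]
    mismatch = digits_only(number_in_message) != digits_only(trusted)
    return trusted, mismatch


if __name__ == "__main__":
    original_file = {"seller": "(616) 555-0100"}  # captured when the file was opened
    number, mismatch = callback_number(original_file, "seller", "616-555-0199")
    print(number, "MISMATCH: escalate" if mismatch else "matches file")
```

Note that the function never returns the number supplied in the recent message. A mismatch is treated as a signal to escalate, not as an update to the file.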

4. Train your team on deepfake detection

Run regular training sessions to help staff recognize deepfake warning signs. Include examples of computer-generated content and practice scenarios where team members must identify potential fraud attempts on video calls.

According to CertifID data, once a company experiences at least one high-risk transaction, its rate of high-risk transactions rises to as much as 6x higher than that of peer companies, which suggests fraudsters actively target firms they've already probed.

5. Update social media privacy settings

Deepfakes require source material. The more audio and video of your team that exists publicly online, the easier it is to build a convincing impersonation.

Advise staff to tighten social media privacy settings and limit publicly available recordings. Remind clients to do the same. The less a fraudster has to work with, the harder the job becomes.

6. Use watermarking for shared images

Title companies and law firms routinely publish headshots, team videos, and testimonials — all of which can be scraped and fed into deepfake tools. Embedding invisible watermarks or metadata tags into these files creates a chain of provenance. If a fraudster generates a deepfake using your team's content, watermarked originals make it easier to prove manipulation occurred.

Encourage staff and clients to apply the same practice to any images or videos shared during a transaction.
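Invisible watermarking usually requires specialized tooling. A simpler, complementary step (not a watermark, and named here as a substitute technique) is to record a cryptographic fingerprint of each original file before publishing it. The sketch below uses Python's standard `hashlib`; the registry layout and function names are assumptions for illustration.

```python
import hashlib


def fingerprint(data: bytes) -> str:
    """SHA-256 digest of a file's exact bytes, as a hex string."""
    return hashlib.sha256(data).hexdigest()


def build_registry(files: dict[str, bytes]) -> dict[str, str]:
    """Map each published file name to the fingerprint of its original bytes."""
    return {name: fingerprint(data) for name, data in files.items()}


def is_unmodified_original(registry: dict[str, str], name: str, data: bytes) -> bool:
    """True only if the bytes match the recorded original exactly."""
    return registry.get(name) == fingerprint(data)


if __name__ == "__main__":
    registry = build_registry({"team-headshot.png": b"original image bytes"})
    print(is_unmodified_original(registry, "team-headshot.png", b"original image bytes"))
    print(is_unmodified_original(registry, "team-headshot.png", b"altered image bytes"))
```

A fingerprint only shows that a given file is, or is not, byte-for-byte the original. Unlike a watermark, it does not survive re-encoding or cropping, so treat it as a record of what you published rather than a detector.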

7. Use real-time fraud monitoring

Use technology that monitors for fraud indicators during transactions. Purpose-built platforms can detect device spoofing, analyze behavioral patterns, and flag suspicious activity before funds leave the account. This layer of protection runs behind the scenes, so your team doesn't have to remember to check it.

CertifID Match flags verification requests opened from known high-risk geolocations, adding another signal layer to your review process.

8. Require multi-factor verification for high-value transfers

For transactions above certain thresholds, require additional verification: biometric confirmation, secondary approvals, and extended review periods before funds move.
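A threshold rule like this is easy to encode so it is applied the same way every time. In the sketch below, the $100,000 default and the check names are placeholders chosen for illustration; set the real threshold according to your own risk policy.

```python
BASELINE_CHECKS = ["identity_verification", "wire_instruction_confirmation"]
HIGH_VALUE_CHECKS = ["biometric_confirmation", "secondary_approval", "extended_review"]


def required_checks(amount_usd: float, threshold_usd: float = 100_000) -> list[str]:
    """Every transfer gets the baseline; transfers at or above the threshold get more."""
    checks = list(BASELINE_CHECKS)
    if amount_usd >= threshold_usd:
        checks += HIGH_VALUE_CHECKS
    return checks


if __name__ == "__main__":
    print(required_checks(25_000))
    print(required_checks(450_000))
```

The baseline applies to every transfer regardless of size. The threshold only adds steps and never removes any.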

CertifID's per-file pricing means every identity, payoff, and wire instruction in a closing is verified and backed by up to $5 million in direct insurance — so you're never in the position of deciding which transactions are worth protecting and which ones aren't.

9. Develop incident response protocols

The worst time to figure out your response to suspected deepfake fraud is while it's happening. Create a clear written protocol in advance — who gets contacted, in what order, through what channels. Document everything as you go.

If funds have already moved, time is critical. CertifID's Fraud Recovery Services team works directly with federal law enforcement, including a partnership with the U.S. Secret Service. To date, CertifID has helped recover over $118.4 million in stolen funds for real estate businesses and consumers.

How to stop deepfakes: Choosing verification tools that work

Not all identity verification tools are built to catch deepfakes. Look for solutions that offer the following capabilities:

- Liveness detection: Distinguishes between live human faces and static photos, videos, or computer-generated content

- Device analysis: Verifies the device being used for identity confirmation to detect virtual machines or spoofed hardware

- Biometric matching: Compares facial features from a live selfie against government-issued identification documents

- Multi-database verification: Cross-references identity information against government databases and fraud monitoring systems

- Direct integration: Connects with title production software like SoftPro, ResWare, RamQuest, and Settlor to avoid workflow disruption

These features are a baseline for catching the current generation of deepfake fraud. CertifID was purpose-built for real estate fraud prevention and integrates all of these capabilities into a single platform.

Every verified transaction includes up to $5 million in direct, first-party insurance coverage per file. In 2025 alone, the platform blocked 1,018 fraudulent transactions and prevented approximately $283 million in losses. You can see the full list of integrations here.

What to do if you suspect a deepfake attack

If you suspect you are dealing with a deepfake attempt, speed matters. Follow these steps immediately:

- Stop the transaction immediately. Do not transfer any funds

- Document everything. Save screenshots, recordings, and communication logs

- Contact law enforcement. File a report with the FBI's Internet Crime Complaint Center (IC3)

- Notify your insurance carrier. Inform your legal counsel about the attempted fraud

- Inform all transaction parties. Use verified communication channels only

- Report to industry authorities. Contact state real estate commissions and title underwriters

- Preserve evidence. Keep records for potential prosecution and recovery efforts

- Review security protocols. Update your process to prevent future attempts

If funds have already been sent, time is critical. CertifID's Fraud Recovery Services team works with federal law enforcement — including a partnership with the U.S. Secret Service.

Depending on the case, it might be possible to freeze fraudulent accounts and recover stolen funds. To date, CertifID has helped recover over $118.4 million in stolen funds for real estate businesses and consumers.

The regulatory landscape: Pending legislation and compliance

Lawmakers have introduced the DEEPFAKES Accountability Act. As of 2026, the bill continues to work through the legislative process. Several states have enacted preliminary regulations requiring disclosure of computer-generated content in commercial contexts.

The framework is evolving quickly, and industry experts predict federal legislation by 2027 that could establish mandatory identity verification requirements for high-value financial transactions.

Title professionals should stay informed about developing requirements. Clients are paying attention too: 85% of consumers are already willing to pay more to work with a real estate business that prioritizes their security from wire fraud.

Deepfakes and wire fraud: Protect your transactions now

Deepfake technology poses a growing risk to real estate transactions. You can keep your operations secure by being aware of this risk and following proper procedures. The best approach is to use layered defenses that include trained staff and specialized verification technology.

CertifID helps title companies and law firms verify identities, confirm wire instructions, and protect mortgage payoffs. It uses liveness detection to fight deepfakes and provides up to $5 million in direct insurance for every file.


FAQ

How can I tell if someone is using a deepfake during a video call?

Look for visual inconsistencies like unnatural facial movements, lighting discrepancies, or audio that doesn't sync with lip movements. Ask spontaneous questions about shared experiences that a fraudster wouldn't know. If anything seems off, end the call and verify the person's identity through an independent channel.

What should I do if I receive wire instructions that seem suspicious?

Always verify wire instructions through a secure communication channel. Use contact information from your original files — never use contact details provided in recent communications.

Can deepfakes fool biometric security systems?

Basic biometric systems can be fooled. However, verification tools with liveness detection help combat computer-generated content and require proof of a live human presence before confirming an identity. When evaluating solutions, look for platforms that combine liveness detection with device analysis and multi-database cross-referencing.

What happens if deepfake fraud occurs during my transaction?

Report it to law enforcement and your insurance carrier immediately. Contact your wire fraud prevention provider's recovery team right away — the faster you act, the higher the chance of recovering funds. Financial losses may be recoverable with proper verification technology and insurance coverage.

How can I protect clients who aren't tech-savvy?

Educate them about verification procedures at the start of the transaction. Use technology that works behind the scenes without requiring complex client interaction. The best solutions send verification requests via text and email with a simple link — clients take a photo of their ID and a selfie, and they're done. No app downloads, no portals, no passwords.

Co-founder & Executive Chairman

Tom Cronkright is the Executive Chairman of CertifID, a technology platform designed to safeguard electronic payments from fraud. He co-founded the company in response to a wire fraud he experienced and the rising instances of real estate wire fraud. He also serves as the CEO of Sun Title, a leading title agency in Michigan. Tom is a licensed attorney, real estate broker, title insurance producer and nationally recognized expert on cybersecurity and wire fraud.

Key Takeaways

- Deepfakes are computer-generated photos, videos, or audio that mimic authentic content. They are often used to impersonate real people without their permission

- Deepfakes can impersonate trusted parties to a transaction. This allows criminals to manipulate wire transfer instructions and divert funds to their own accounts

- According to the 2026 State of Wire Fraud Report, BEC attacks have surged 1,760% since generative AI tools became widely available — and deepfake-enabled impersonation is driving that threat deeper into real estate transactions.

- Use identity verification software that includes liveness detection. This confirms identities, helps combat deepfakes, and prevents wire fraud

- Train your team to recognize deepfake warning signs. Look for unnatural facial expressions, inconsistent lighting, and odd speech patterns

In early 2024, a finance worker at British engineering firm Arup wired $25.6 million to criminals after joining a video call where every participant, including the company's CFO, turned out to be a deepfake.

The employee had initially suspected a phishing email, but put aside his doubts because the people on the call looked and sounded exactly like colleagues he recognized. The funds were sent across 15 separate transactions before the fraud was discovered.

Deepfakes are the latest threat to catch the attention of federal lawmakers. They've reignited public debate about privacy and consent. But the use of deepfakes for committing financial crimes still flies under the radar for most title and escrow professionals.

Fraudsters are counting on that gap. According to our research, 60.1% of title professionals say fraud attempts have increased in frequency over the past year — and 72.6% report those attempts are becoming more sophisticated.

In this guide, you'll learn how deepfakes enable wire fraud schemes targeting title companies, law firms, and their clients. We'll cover how to identify computer-generated impersonation attempts and proven strategies to protect your transactions.

What is deepfake technology? How digital impersonation works

Deepfakes are computer-generated photos, videos, or audio that mimic authentic content. They are often used to impersonate real people without their permission. The technology works by ingesting original audiovisual data. It then generates avatars matching the look, facial expressions, tone, style, and gestures of a real person.

Two capabilities make deepfakes particularly dangerous in a real estate context:

- Face-swapping generates a digital version of a real person's face and applies it to another body in a video or photo. A fraudster doesn't need to look like their target — the software handles the rest.

- Speech synthesis interprets text and generates speech in the voice of a real person. If someone has attended your webinars, appeared in listing videos, or posted on social media, there's likely enough source material online to clone a convincing version of their voice.

Unlike simple photo filters, high-quality deepfakes are difficult to recognize and are built with malicious intent. They require large amounts of source material — thousands of recordings or images of the target.

This is why fraudsters typically focus on people with a large online presence: business executives, real estate professionals, and anyone whose face and voice appear regularly in public content.

How criminals use deep fake AI for wire fraud

There are positive uses for this technology, such as dubbing media in other languages. But criminals often use it for social engineering — manipulating people to gain trust and enable crime. Deepfakes impersonate trusted parties to change wire instructions and divert funds to a criminal's bank account.

Here are the four most common attack methods targeting real estate transactions:

1. Video call impersonation

A fraudster can use deepfake technology to imitate a lender or real estate professional on a video conference call. They appear as a legitimate party and can then instruct a property seller to wire their mortgage payoff to the fraudster's bank. Scammers also use this method to imitate sellers, convincing title companies to send buyer funds to an untraceable account.

2. Voice cloning and phone fraud

Deepfakes have reached a point where even experienced professionals cannot always spot the difference between a computer-generated voice and the real thing.

In the Arup case, fraudsters used deepfake voices and face-swapping to imitate multiple colleagues in a single video meeting — convincing a finance worker to send $25.6 million across 15 transactions.

The implication for title professionals is direct: if your team relies on recognizing a familiar voice to confirm wire instructions, that process is no longer a reliable safeguard.

3. Combined phishing and deepfake attacks

SIM-swap technology allows fraudsters to disguise their real phone numbers and manipulate caller ID, making it appear they are calling from a trusted business.

When a fraudster calls from a familiar number with a recognizable voice, even experienced professionals can fall victim. This is why verifying identities through a single channel — even a phone callback — is no longer enough on its own.

4. Biometric system bypass

Deepfakes can fool basic identity verification tools. Certain software collects user biometrics from facial scans to confirm identity before authorizing transactions. Fraudsters use face-swapping during these scans to pass as authentic users — and once logged in, they can authorize wire transfers to their own accounts.

This is exactly why liveness detection — technology that confirms a live human is present rather than a recording or generated image — has become a baseline requirement for identity verification in real estate.

The table below shows how deepfake technology has changed traditional fraud methods:

These methods are not theoretical. Our recent data found that BEC attacks have increased by an astonishing 1,760% since generative AI tools became common. Now, 57.5% of title professionals face suspicious activity at least once every three months. Generative AI is making these attacks faster, cheaper, and more convincing every month.

Warning signs: How to detect deepfake attempts in real-time

You can learn to spot the glitches in deepfakes. Recognizing these red flags during video calls can disrupt fraud before funds leave the account.

Visual detection clues to watch for:

- Some facial features lack definition or appear blurred

- Color, lighting, or texture appears inconsistent across a participant's face

- Gestures and facial expressions look unnatural, such as unblinking eyes

- The participant's face is familiar, but their clothing and background are unusual

- Lip movements do not perfectly align with speech

These visual artifacts are more noticeable on larger screens. When possible, review video calls on a full-size monitor rather than a phone.

Audio detection clues that signal a potential deepfake:

- The voice sounds convincing, but the speaker uses uncharacteristic speech patterns

- There are slight robotic undertones or unusual pauses between words

- Audio quality is inconsistent and does not match the video quality

- Background noise seems artificially generated

If anything sounds off, ask the speaker an unscripted question about a shared experience. Deepfakes can mimic appearances but cannot recall specific details from prior conversations.

Contextual red flags that should trigger immediate verification:

- Requests for wire transfers happen during unusual hours

- The person is reluctant to answer spontaneous questions about shared experiences

- They request to bypass normal verification procedures

- Technical issues conveniently prevent a closer examination of video quality

When limited source data is available, deepfake software cannot generate convincing impersonations. Your team can learn to spot these limitations — but detection alone is not a reliable defense. Technology-based verification is essential.

How to prevent deepfakes from compromising wire transfers: 9 proven strategies

The best defense against deepfake wire fraud requires a layered approach. You need to combine technology, training, and verification protocols. Here are 9 strategies your team can implement now.

1. Use identity verification software with liveness detection

Use verification software that includes liveness detection — technology that confirms a genuine human is present and rejects photos, recordings, and computer-generated renderings.

CertifID Match helps combat deepfakes, SIM-swap, and phishing by analyzing over 150 fraud indicators in real-time.

It matches biometrics from a live selfie to a government-issued ID, cross-referencing results against databases from 250 government agencies worldwide.

2. Establish multi-channel verification protocols

Never rely on a single channel for wire transfer authorization. If you receive instructions via video call, confirm them through a secure platform. If you receive verbal instructions, require written confirmation through a tool that independently validates those details.

Every wire instruction change deserves a second check through a separate, verified channel — no exceptions and no shortcuts because you're under deadline pressure.

3. Create standardized verification procedures

Develop standardized procedures using independently verified contact information. When wire instructions change or you handle high-value transfers, always verify through contact details from your original files — not information provided in recent communications.

This matters especially for seller impersonation fraud involving vacant land, where fraudsters target absentee property owners who may not notice something is wrong until the deal has already closed.

4. Train your team on deepfake detection

Run regular training sessions to help staff recognize deepfake warning signs. Include examples of computer-generated content and practice scenarios where team members must identify potential fraud attempts on video calls.

According to CertifID data, once a company experiences at least one high-risk transaction, their rate of high-risk transactions rises to up to 6x higher than peer companies — meaning fraudsters actively target firms they've already probed.

5. Update social media privacy settings

Deepfakes require source material. The more audio and video of your team that exists publicly online, the easier it is to build a convincing impersonation.

Advise staff to tighten social media privacy settings and limit publicly available recordings. Remind clients to do the same. The less a fraudster has to work with, the harder the job becomes.

6. Use watermarking for shared images

Title companies and law firms routinely publish headshots, team videos, and testimonials — all of which can be scraped and fed into deepfake tools. Embedding invisible watermarks or metadata tags into these files creates a chain of provenance. If a fraudster generates a deepfake using your team's content, watermarked originals make it easier to prove manipulation occurred.

Encourage staff and clients to apply the same practice to any images or videos shared during a transaction.

7. Use real-time fraud monitoring

Use technology that monitors for fraud indicators during transactions. Purpose-built platforms can detect device spoofing, analyze behavioral patterns, and flag suspicious activity before funds leave the account. This is a layer of protection that runs behind the scenes, and your team doesn't have to remember to check it because the platform is checking automatically.

CertifID Match flags verification requests opened from known high-risk geolocations, adding another signal layer to your review process.

8. Require multi-factor verification for high-value transfers

For transactions above certain thresholds, require additional verification: biometric confirmation, secondary approvals, and extended review periods before funds move.

CertifID's per-file pricing means every identity, payoff, and wire instruction in a closing is verified and backed by up to $5 million in direct insurance — so you're never in the position of deciding which transactions are worth protecting and which ones aren't.

9. Develop incident response protocols

The worst time to figure out your response to suspected deepfake fraud is while it's happening. Create a clear written protocol in advance — who gets contacted, in what order, through what channels. Document everything as you go.

If funds have already moved, time is critical. CertifID's Fraud Recovery Services team works directly with federal law enforcement, including a partnership with the U.S. Secret Service. To date, CertifID has helped recover over $118.4 million in stolen funds for real estate businesses and consumers.

How to stop deepfakes: Choosing verification tools that work

Not all identity verification tools are built to catch deepfakes. Look for solutions that offer the following capabilities:

- Liveness detection: Distinguishes between live human faces and static photos, videos, or computer-generated content

- Device analysis: Verifies the device being used for identity confirmation to detect virtual machines or spoofed hardware

- Biometric matching: Compares facial features from a live selfie against government-issued identification documents

- Multi-database verification: Cross-references identity information against government databases and fraud monitoring systems

- Direct integration: Connects with title production software like SoftPro, ResWare, RamQuest, and Settlor to avoid workflow disruption

These features are a baseline for catching the current generation of deepfake fraud. CertifID was purpose-built for real estate fraud prevention and integrates all of these capabilities into a single platform.

Every verified transaction includes up to $5 million in direct, first-party insurance coverage per file. In 2025 alone, the platform blocked 1,018 fraudulent transactions and prevented approximately $283 million in losses. CertifID also publishes a full list of its integrations.

What to do if you suspect a deepfake attack

If you suspect you are dealing with a deepfake attempt, speed matters. Follow these steps immediately:

- Stop the transaction immediately. Do not transfer any funds

- Document everything. Save screenshots, recordings, and communication logs

- Contact law enforcement. File a report with the FBI's Internet Crime Complaint Center (IC3)

- Notify your insurance carrier and legal counsel. Tell both about the attempted fraud

- Inform all transaction parties. Use verified communication channels only

- Report to industry authorities. Contact state real estate commissions and title underwriters

- Preserve evidence. Keep records for potential prosecution and recovery efforts

- Review security protocols. Update your process to prevent future attempts

If funds have already been sent, time is critical. Depending on the case, CertifID's Fraud Recovery Services team, which works with federal law enforcement, may be able to help freeze fraudulent accounts and recover stolen funds.

The regulatory landscape: Pending legislation and compliance

Lawmakers have introduced the DEEPFAKES Accountability Act. As of 2026, the bill is still working through the legislative process. Several states have enacted preliminary regulations requiring disclosure of computer-generated content in commercial contexts.

The framework is evolving quickly, and industry experts predict federal legislation by 2027 that could establish mandatory identity verification requirements for high-value financial transactions.

Title professionals should stay informed about developing requirements. There is a business case, too: 85% of consumers are already willing to pay more to work with a real estate business that prioritizes their security from wire fraud.

Deepfakes and wire fraud: Protect your transactions now

Deepfake technology poses a growing risk to real estate transactions. You can keep your operations secure by being aware of this risk and following proper procedures. The best approach is to use layered defenses that include trained staff and specialized verification technology.

CertifID helps title companies and law firms verify identities, confirm wire instructions, and protect mortgage payoffs. The platform uses liveness detection to fight deepfakes and provides up to $5 million in direct insurance for every file.


Co-founder & Executive Chairman

Tom Cronkright is the Executive Chairman of CertifID, a technology platform designed to safeguard electronic payments from fraud. He co-founded the company in response to a wire fraud he experienced and the rising instances of real estate wire fraud. He also serves as the CEO of Sun Title, a leading title agency in Michigan. Tom is a licensed attorney, real estate broker, title insurance producer and nationally recognized expert on cybersecurity and wire fraud.
